The previous post about Data Models included a rather long-winded discussion of why strict monolithic data models are not a viable alternative in the new paradigm. The main conclusion was that, rather than focusing on the design of a strict data model for all of manufacturing, we should step back and understand the problem that needs to be solved. That discussion was a bit theoretical, which is why I feel compelled to go one level of detail deeper in an attempt to clarify some of the concepts.

What do we need to help us on the transformation journey to maturity? How can we achieve Visibility, Transparency, Predictive Capacity and Adaptability? We need to shift the thinking from "what is the correct data model?" to "what do we need to become predictive and adaptable?". First of all, we need more data. Start digitizing your operation: the majority of the data we need is still on paper or in diverse electronic documents and spreadsheets. Second, and this is the topic of this post, understand the informational elements of your operation and define a loose data dictionary that supports your digitization initiatives and citizen developers. With that, and with modern and emerging technologies for data analysis, including AI/ML, you will be able to gain the required insights and intelligence without a strict, standardized, monolithic relational data model. This gives people within an organization the freedom to capture data without spending immense effort curating and micromanaging how the data is stored and structured. Remember that democratization and citizen development are key enablers of digital transformation; the creative abilities of citizen developers, combined with no-code technologies, are the fastest way to digitize and instrument operations. Get more digital data fast. That is more important than how it is structured, and don't forget variety: multimedia and so on.

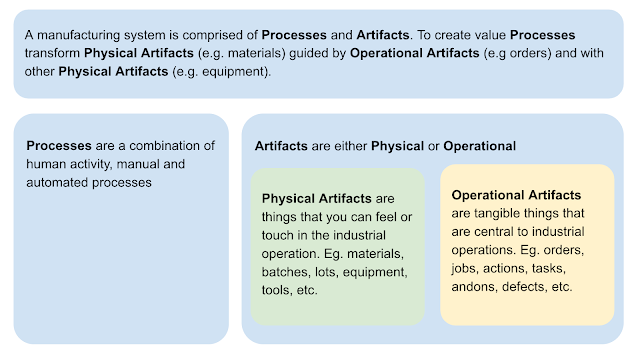

In my close to 30 years of studying the manufacturing domain, it has become clear that there are just a few critical informational elements in a manufacturing operation. With that in mind, I recommend an approach that uses generalization to create transparency and interpretability but still allows flexibility for specific use cases and varying degrees of complexity. The following generalization provides a top-down perspective into the complexity of a manufacturing operation.

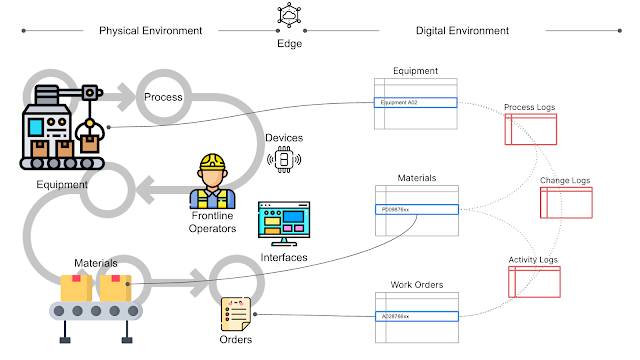

With this thinking, data about the artifacts represents the current status of each artifact: a single unique set of data (e.g. a row in a table). The processes that impact the artifact are captured in a historical record: a set of data for each significant transaction that transformed the state of the artifact (e.g. a running log). The result is a data set that represents each real-world artifact in a one-to-one relationship, while everything that has happened to that artifact is captured in logs.
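To make the artifact/log split concrete, here is a minimal Python sketch. It is only an illustration under my own assumptions: the names `ArtifactStore`, `ProcessEvent`, and `record`, as well as the work order ID and field names, are hypothetical and not part of any standard or platform. It keeps one current-state record per artifact and appends one log entry per significant transaction.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass
class ProcessEvent:
    """One log entry: a significant transaction that changed an artifact."""
    artifact_id: str
    process: str
    changes: dict[str, Any]
    timestamp: datetime


@dataclass
class ArtifactStore:
    # Current status: exactly one record per real-world artifact.
    current: dict[str, dict[str, Any]] = field(default_factory=dict)
    # History: append-only running log of everything that happened.
    log: list[ProcessEvent] = field(default_factory=list)

    def record(self, artifact_id: str, process: str, **changes: Any) -> ProcessEvent:
        """Append a log entry and fold its changes into the current status."""
        event = ProcessEvent(artifact_id, process, dict(changes),
                             datetime.now(timezone.utc))
        self.log.append(event)
        self.current.setdefault(artifact_id, {}).update(changes)
        return event

    def history(self, artifact_id: str) -> list[ProcessEvent]:
        """Return every logged transaction for one artifact, oldest first."""
        return [e for e in self.log if e.artifact_id == artifact_id]


# Hypothetical example: a work order moving through two process steps.
store = ArtifactStore()
store.record("WO-1001", "cutting", status="in_progress", station="saw-1")
store.record("WO-1001", "inspection", status="passed", inspector="op-7")
```

After these two calls, `store.current["WO-1001"]` holds the single latest picture of the work order, while `store.history("WO-1001")` returns both transactions in order. Note that nothing here fixes which fields an artifact may carry; that looseness is the point.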

If you create simple templates that allow contextualization of data at the source based on these simple rules, you can use modern analytics tools to rapidly get the insights you need to mature digitally. I find that it works for human-driven analytics, from charting and graphing in Excel to Tableau, Sigma or whatever tool you prefer. Taking this even further, you can supercharge it with AI/ML-driven analytics. I urge you to try. The good news is that the effort to build and use something like this with modern operational platforms is minimal compared to building and using a complex relational data model.
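As a rough sketch of what such a template could look like, the Python below requires only a few context keys at the point of capture and leaves the rest of the payload free-form. The key names in `REQUIRED_CONTEXT`, the `contextualize` helper, and the sample serial numbers and readings are all assumptions made for illustration, not a prescribed dictionary.

```python
from collections import Counter
from datetime import datetime, timezone

# Hypothetical loose template: only these context keys are mandatory.
REQUIRED_CONTEXT = ("artifact_id", "artifact_type", "process")


def contextualize(raw: dict, **context) -> dict:
    """Attach context to a raw capture; reject it only if context is missing."""
    missing = [key for key in REQUIRED_CONTEXT if key not in context]
    if missing:
        raise ValueError(f"missing context: {missing}")
    # The free-form payload is kept as-is; no fixed schema is imposed on it.
    return {
        **context,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "data": dict(raw),
    }


# Three captures with different payload shapes but the same context keys.
records = [
    contextualize({"torque_nm": 41.8},
                  artifact_id="SN-001", artifact_type="unit", process="assembly"),
    contextualize({"defect": "scratch", "photo": "img_0042.jpg"},
                  artifact_id="SN-001", artifact_type="unit", process="inspection"),
    contextualize({"defect": "dent"},
                  artifact_id="SN-002", artifact_type="unit", process="inspection"),
]

# Because every record shares the context keys, simple analysis needs no schema.
events_per_process = Counter(r["process"] for r in records)
```

Here `events_per_process` counts one assembly event and two inspection events, even though the three payloads have nothing in common. The same shared context keys are what a charting tool or an ML pipeline would group and filter on.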

I also find that this model and generalization is a helpful tool for rapidly gaining an understanding of a specific manufacturing operation. In fact, I use it as a mental model when I do plant walk-throughs (Gemba walks), after which digital improvement ideas for the observed operational challenges can be defined much faster and more accurately. If you look closely, many of the prevailing standards share a similar generalization but unfortunately have been overengineered past the point where they are practical.

Quickly understanding how a specific manufacturing system operates, from the machine to the line to the plant level, is the basis of digitization; it is the secret to gaining Visibility, Transparency, Predictive Capacity and Adaptability. Remember, that is what we are after; the technology is just a means. If we can make it easier, more democratic, and adopted by the frontline masses, then the network effect kicks in, transformation happens faster, we gain productivity faster, and we are well on our way to crossing the digital divide.